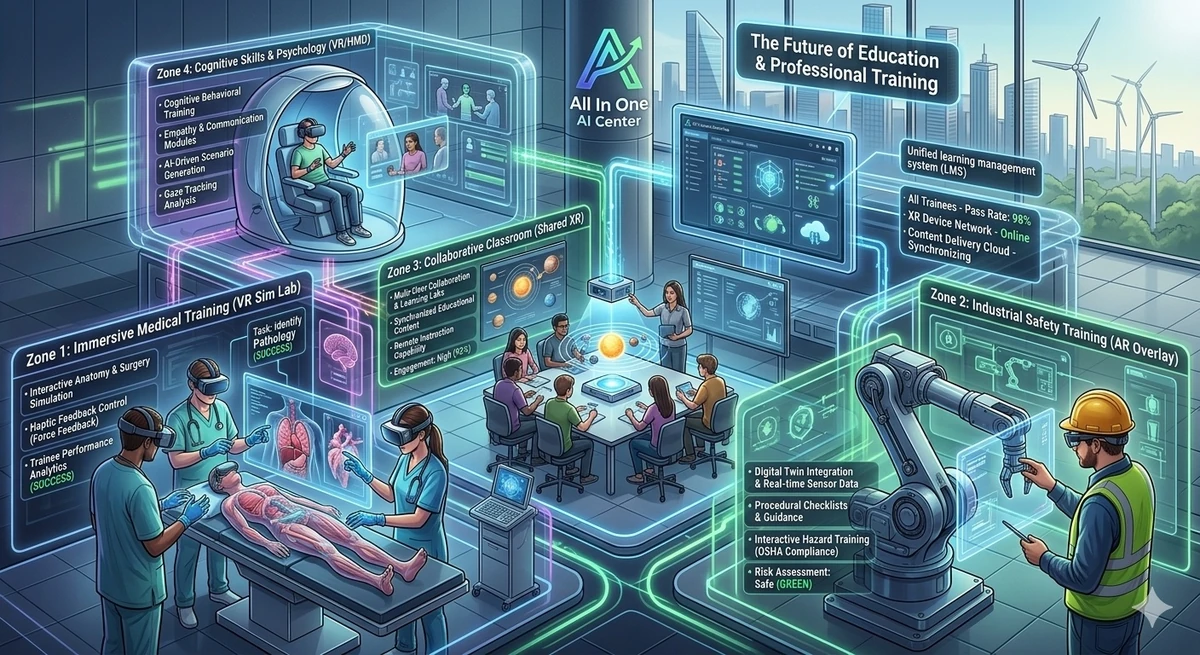

Project Overview

The Government Organisation in Abu Dhabi needed a modern, immersive onboarding experience for new government employees — one that could deliver orientation, guided learning journeys, and real-time collaboration in a way that traditional video conferencing and document-based onboarding simply cannot match.

I designed and delivered a full metaverse platform: a persistent virtual office environment where employees could navigate, attend guided onboarding sessions, collaborate in real time, and engage with interactive learning modules — all from VR headsets, mobile devices, or web browsers.

Multi-User Architecture with Photon

The most technically complex aspect of this project was the multi-user networking architecture. Unlike single-user VR experiences, a metaverse platform requires:

- Real-time avatar synchronisation — all users see each other's movements, gestures, and positions accurately

- Voice communication — spatial audio so users hear each other as if in the same physical space

- Shared interactive objects — presentation screens, whiteboards, and interactive modules that update for all users simultaneously

- Scalable room management — the ability to create, join, and manage virtual meeting spaces dynamically

I implemented all of this using Photon Unity Networking (PUN) combined with Photon Voice for spatial audio. The architecture needed to be robust enough for government use — consistent, secure, and reliable across varying network conditions.
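
To make the room-management requirement concrete, here is a minimal Python sketch of join-or-create logic with a capacity limit. It is illustrative only: the platform itself was built on PUN in Unity, and the `Room` and `RoomRegistry` names, the in-memory dictionary, and the default capacity of 16 are assumptions made for this example rather than Photon's API or the project's code.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Room:
    """A virtual meeting space with a fixed capacity (hypothetical model)."""
    name: str
    max_players: int
    players: set = field(default_factory=set)

    @property
    def is_full(self) -> bool:
        return len(self.players) >= self.max_players


class RoomRegistry:
    """In-memory stand-in for the room list a networking service maintains."""

    def __init__(self) -> None:
        self._rooms = {}  # room name -> Room

    def join_or_create(self, name: str, player_id: str, max_players: int = 16) -> Room:
        # Create the room on first use; max_players only applies at creation.
        room = self._rooms.get(name)
        if room is None:
            room = Room(name=name, max_players=max_players)
            self._rooms[name] = room
        # Re-joining is allowed, but a new player cannot enter a full room.
        if player_id not in room.players and room.is_full:
            raise RuntimeError(f"room '{name}' is full")
        room.players.add(player_id)
        return room

    def leave(self, name: str, player_id: str) -> None:
        room = self._rooms.get(name)
        if room is None:
            return
        room.players.discard(player_id)
        # Drop empty rooms so the registry only lists live spaces.
        if not room.players:
            del self._rooms[name]

    def open_rooms(self) -> list:
        # Names of rooms that can still accept players, in alphabetical order.
        return sorted(n for n, r in self._rooms.items() if not r.is_full)
```

In a sketch like this, a meeting space exists only while someone is in it, which keeps the list of joinable rooms short without a separate clean-up job.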

Cross-Platform Delivery

Government employees access technology on very different devices. The platform needed to work on:

- Meta Quest VR headsets — for the full immersive experience

- Android and iOS mobile — for employees without VR hardware

- WebGL — for browser-based access on desktop and laptop computers

Maintaining a single Unity codebase across all three platforms while optimising performance for each was a significant engineering challenge. I used Unity's Addressables system for platform-specific asset loading and kept strict platform abstraction layers in the codebase.
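
The idea of a platform abstraction layer can be sketched in a few lines. The Python below is an illustration of the pattern, not the project's Unity code: `InteractionBackend`, the three backend classes, `create_backend`, and `whiteboard_prompt` are hypothetical names, and the gesture flag is an assumed example of a capability that differs between platforms.

```python
from abc import ABC, abstractmethod


class InteractionBackend(ABC):
    """Platform-specific layer: how a user selects things on this device."""

    @abstractmethod
    def describe_select_action(self) -> str:
        ...

    @abstractmethod
    def supports_hand_gestures(self) -> bool:
        ...


class QuestBackend(InteractionBackend):
    def describe_select_action(self) -> str:
        return "pull the controller trigger"

    def supports_hand_gestures(self) -> bool:
        return True


class MobileBackend(InteractionBackend):
    def describe_select_action(self) -> str:
        return "tap the screen"

    def supports_hand_gestures(self) -> bool:
        return False


class WebGLBackend(InteractionBackend):
    def describe_select_action(self) -> str:
        return "click the left mouse button"

    def supports_hand_gestures(self) -> bool:
        return False


# The only place that knows which concrete class belongs to which platform.
BACKENDS = {"quest": QuestBackend, "mobile": MobileBackend, "webgl": WebGLBackend}


def create_backend(platform: str) -> InteractionBackend:
    try:
        return BACKENDS[platform]()
    except KeyError:
        raise ValueError(f"unsupported platform: {platform}") from None


def whiteboard_prompt(backend: InteractionBackend) -> str:
    """Shared logic: written once, unaware of the concrete platform."""
    hint = f"To open the whiteboard, {backend.describe_select_action()}."
    if backend.supports_hand_gestures():
        hint += " You can also wave to greet colleagues."
    return hint
```

Shared code such as `whiteboard_prompt` depends only on the interface, so supporting another device in this sketch means adding one backend class and one dictionary entry.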

Interactive Onboarding Modules

The learning journey was structured as a series of interactive modules that new employees completed in sequence:

- Virtual orientation tour — a guided walk through the virtual government office, meeting key departments

- Policy and compliance training — interactive scenarios covering government workplace policies

- Team introduction sessions — live multi-user events where new employees meet their teams in VR

- Resource and tool familiarisation — interactive demonstrations of internal systems and tools
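
One simple way to model a journey that must be completed in sequence is a counter over an ordered list. The Python sketch below is an assumption about how such gating could work, not the platform's actual implementation; the module identifiers and the `OnboardingJourney` class are hypothetical.

```python
from typing import Optional

# Hypothetical identifiers for the four modules listed above, in order.
MODULES = [
    "orientation_tour",
    "policy_training",
    "team_introduction",
    "tool_familiarisation",
]


class OnboardingJourney:
    """Tracks one employee's progress through an ordered module list."""

    def __init__(self, modules) -> None:
        self._modules = list(modules)
        if not self._modules:
            raise ValueError("a journey needs at least one module")
        self._completed = 0  # number of modules finished so far

    @property
    def current(self) -> Optional[str]:
        # The next module to complete, or None once the journey is finished.
        if self._completed < len(self._modules):
            return self._modules[self._completed]
        return None

    def is_unlocked(self, module: str) -> bool:
        # Completed modules and the current one are accessible; later ones are not.
        return self._modules.index(module) <= self._completed

    def complete(self, module: str) -> None:
        # Only the current module can be completed, which enforces the order.
        if module != self.current:
            raise ValueError(f"'{module}' is not the current module")
        self._completed += 1

    @property
    def progress(self) -> float:
        return self._completed / len(self._modules)
```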

Tech Stack

Key Outcomes

How AI Tools Contributed to This Build

A multi-user metaverse platform that targets VR, mobile, and WebGL is one of the more complex kinds of Unity project in terms of codebase scope. AI tools helped at multiple stages.

ChatGPT for Unity networking code — Photon PUN has an extensive API surface, and its patterns for room management, player instantiation, and state synchronisation across platforms have specific quirks. ChatGPT was useful for generating Photon PUN boilerplate and for debugging synchronisation issues, particularly around the Addressables system for platform-specific asset loading. Two years of experience using ChatGPT for Unity work meant I knew how to frame networking questions to get useful starting points quickly.

Claude for cross-platform architecture decisions — the challenge of maintaining a single codebase across Quest standalone, mobile, and WebGL while keeping platform-specific optimisation sensible required architectural decisions with broad implications. Claude was useful for thinking through the abstraction layer structure — specifically how to separate platform-specific interaction code from shared game logic without creating maintenance problems as the platform requirements diverged over the project.

Documentation and client communication — government projects involve extensive stakeholder documentation. ChatGPT significantly reduced the time spent on technical specification documents, UAT testing plans, and user guides. Describing the feature and asking for a structured first draft, then editing for accuracy and tone, was consistently faster than writing from scratch.

Lessons Learned

- Government clients require extensive UAT cycles — plan for significantly more stakeholder review rounds than for enterprise clients. Every detail matters when the end users are government employees.

- Cross-platform parity is harder than it looks — features that work perfectly on Quest often need substantial redesign for WebGL and mobile. Budget dedicated time for platform-specific adaptation.

- Spatial audio is essential for metaverse engagement — without believable spatial voice communication, multi-user VR feels flat and users disengage quickly. Photon Voice with proper HRTF configuration made a huge difference.

- Content design is as important as technical delivery — the onboarding modules needed careful instructional design to be effective. Technical excellence alone doesn't make training work.

Frequently Asked Questions

What is a metaverse onboarding platform?

A metaverse onboarding platform is a persistent virtual environment where new employees complete their organisational orientation and training. Unlike video calls or document-based onboarding, it provides an immersive, interactive experience where employees can explore virtual offices, attend guided sessions, and collaborate with colleagues in real time — from VR headsets, mobile devices, or web browsers.

What networking solution works best for multi-user VR?

Photon Unity Networking (PUN) combined with Photon Voice is one of the most mature and widely used solutions for multi-user VR in Unity. It handles room management, state synchronisation, and voice communication with good reliability and reasonable latency. For larger-scale deployments, alternatives such as Mirror Networking or a custom WebSocket solution may be more appropriate.